European lawmakers pass AI Act, world’s first comprehensive AI law

EU law is the first comprehensive set of rules for artificial intelligence, including new restrictions on how it can be used

European lawmakers approved the world’s most comprehensive legislation yet on artificial intelligence, setting out sweeping rules for developers of AI systems and new restrictions on how the technology can be used.

The European Parliament on Wednesday voted to give final approval to the law after reaching a political agreement last December with European Union member states. The rules, which are set to take effect gradually over several years, ban certain AI uses, introduce new transparency rules and require risk assessments for AI systems that are deemed high-risk.

Members of the European Parliament take part in a voting session during a plenary session at the European Parliament in Strasbourg, eastern France, on March 13, 2024. (FREDERICK FLORIN/AFP via Getty Images / Getty Images)

The law comes amid a global debate about the future of AI and its potential risks and benefits as the technology is increasingly adopted by companies and consumers. Elon Musk recently sued OpenAI and its chief executive, Sam Altman, for allegedly breaking the company’s founding agreement by giving priority to profit over AI’s benefits for humanity. Altman has said AI should be developed with great caution and offers immense commercial possibilities.

The new legislation applies to AI products in the EU market, regardless of where they were developed. It is backed by fines of up to 7% of a company’s worldwide revenue.

The AI Act is "the first regulation in the world that is putting a clear path towards a safe and human-centric development of AI," said Brando Benifei, an EU lawmaker from Italy who helped lead negotiations on the law.

The law still needs final approval from EU member states, but that process is expected to be a formality since they already gave the legislation their political endorsement.

While the law only applies in the EU, it is expected to have a global impact because large AI companies are unlikely to want to forgo access to the bloc, which has a population of about 448 million. Other jurisdictions could also use the new law as a model for their AI regulations, contributing to a ripple effect.

"Anybody that intends to produce or use an AI tool will have to go through that rulebook," said Guillaume Couneson, a partner at law firm Linklaters.

Several jurisdictions worldwide have introduced or are considering new rules for AI. The Biden administration last year signed an executive order requiring major AI companies to notify the government when developing a model that could pose serious risks. Chinese regulators have set out rules focused on generative AI.

Vice President Kamala Harris introduces President Joe Biden during an event about their administration's work to regulate artificial intelligence in the East Room of the White House on October 30, 2023 in Washington, DC. (Getty Images)

The EU’s AI Act is the latest example of the bloc’s role as an influential global rule maker. A separate competition law that came into effect for certain tech giants earlier this month is pushing Apple to change its App Store policies and Alphabet’s Google to modify how search results appear for users in the bloc. Another law focused on online content is pushing large social-media companies to report on what they are doing to address illegal content and disinformation on their platforms.

The AI Act won’t take effect right away. The prohibitions in the legislation, which include bans on the use of emotion-recognition AI in schools and workplaces and on untargeted scraping of images for facial-recognition databases, are expected to become enforceable later this year. Other obligations are expected to kick in gradually between next year and 2027.

The new rules will eventually require providers of general-purpose AI models, which are trained on vast data sets and underpin more specialized AI applications, to have up-to-date technical documentation on their models. They will also have to publish a summary of the content they used to train the model.

Makers of the most powerful AI models, those deemed to have what the EU calls a "systemic risk," will be required to put those models through state-of-the-art safety evaluations and notify regulators of serious incidents that occur with their models. They will also have to implement mitigations for potential risks and cybersecurity protection, the law says.

The bloc’s initial proposal for the legislation was published in 2021, before the widespread popularity of OpenAI’s ChatGPT and other AI-powered chatbots, and provisions on general-purpose AI were added during the legislative process.

European legislators began drafting the EU's new AI law before ChatGPT and other AI chatbots became popular. (Nicolas Economou/NurPhoto via Getty Images / Getty Images)

Industry groups and some European governments pushed back against the introduction of blanket rules for general-purpose AI, saying legislators should focus on risky uses of the technology—rather than the models that underpin its use.

France, home to Mistral AI, and Germany sought to water down some of the legislation’s proposals. Mistral Chief Executive Arthur Mensch said recently that the AI Act would—after some changes in final negotiations that lightened some obligations—be a manageable burden for his company, even if he thinks the law should have remained focused on how AI is used and not the underlying technology.

Lawmakers said the AI Act was among the most heavily lobbied pieces of legislation the bloc has dealt with in recent years.

Corporate watchdogs and some lawmakers said they wanted the legislation to include tougher requirements—such as the rules for safety evaluations and risk mitigation—for all general-purpose AI models and not just the most powerful models.

Lobby group BusinessEurope said Wednesday that it supports the law’s risk-based approach to regulating AI, although it said there are questions about how the law will be interpreted in practice. Digital-rights group Access Now said the final text of the legislation was full of loopholes and failed to adequately protect people from some of the most dangerous uses of AI.

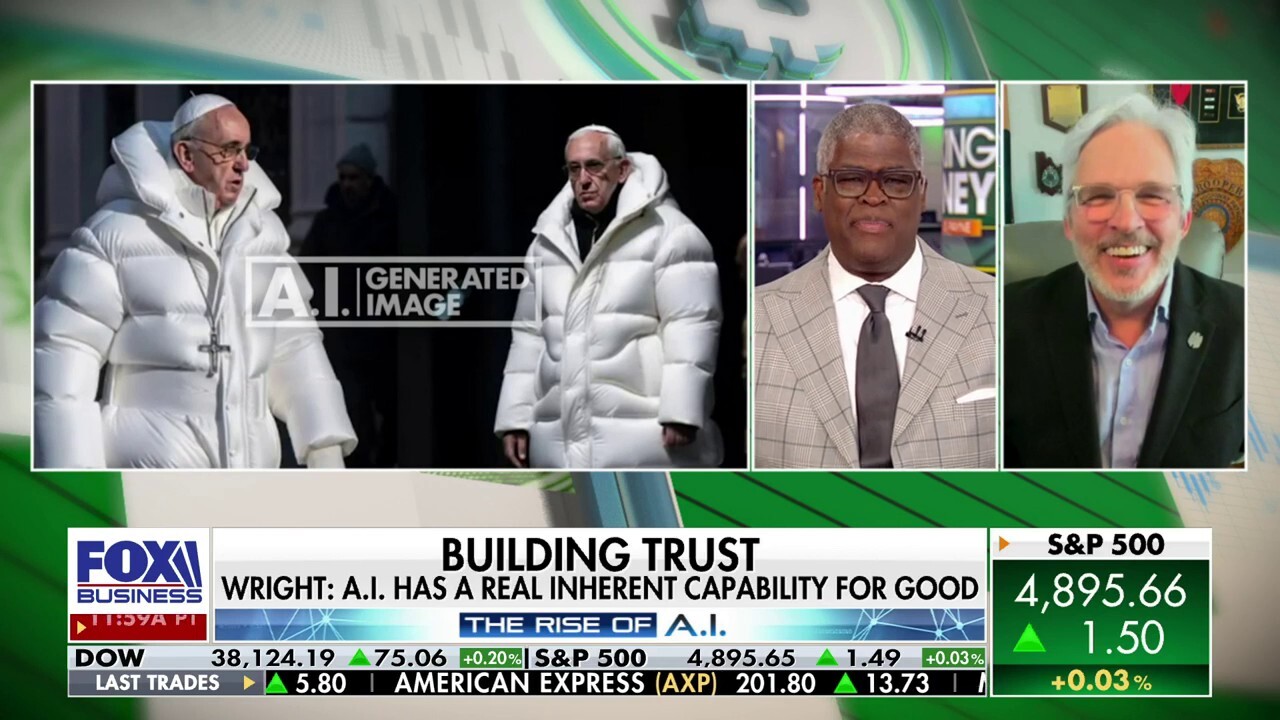

AI regulations could limit US’s ability to compete globally: Morgan Wright

SentinelOne chief security advisor Morgan Wright joins ‘Making Money’ to discuss AI regulation talks, saying if a human is ‘in the loop’ with AI, it can be used for good.

Another element of the law requires clear labeling of so-called deepfakes, which refer to images, audio, or video that have been generated or manipulated by AI and might otherwise appear to be authentic. AI systems that are deemed by legislators to be high-risk, such as those used for immigration or critical infrastructure, must conduct risk assessments and ensure that they are using high-quality data, among other requirements.

Lawmakers in Europe said they sought to make the legislation flexible so that it can adapt to rapidly evolving technology. For example, one part of the law says the European Commission—the EU’s executive arm—can update technical elements of its definition of general-purpose AI models based on market and technological developments.